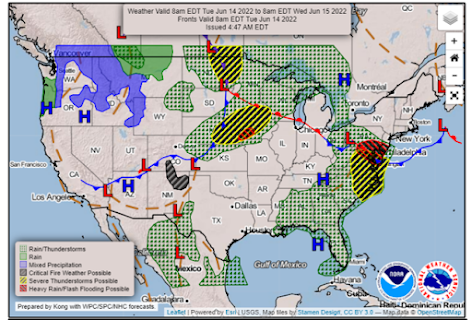

The Aviation Weather Center (AWC) has consistently received feedback from its pilot partners asking for an easy-to-interpret, quick-glance graphic that gives them an idea of what hazards to expect in the coming days. Testbed participants are tasked with developing sample graphics for both a “Key Aviation Message”, similar to the Key Messages developed by local forecast offices, and Day 2 and Day 3 outlooks for aviation hazards.

The goal of the Key Aviation Message is to communicate an impactful weather event in the National Airspace System (NAS) that may require general aviation (GA) pilots to alter their flight plans. These decision support graphics are intended to be issued a couple of times a day. This experiment specifically tests one graphic in the morning covering that day's events and a separate graphic in the afternoon or evening covering a major area of concern for the following day.

A major aim for our participants this week is to help identify what information is most important to share with pilots and how we can help local Weather Forecast Offices (WFOs) share aviation hazards. Participants reiterated the importance of using plain language and of tailoring flight levels and impacts to our GA pilot audience. They also noted the importance of including a valid time or next issuance time, and of using local time rather than Zulu time. WFO participants mentioned that, when their area is impacted, they could share the graphics on their own social media in addition to AWC's, helping reach a larger audience!

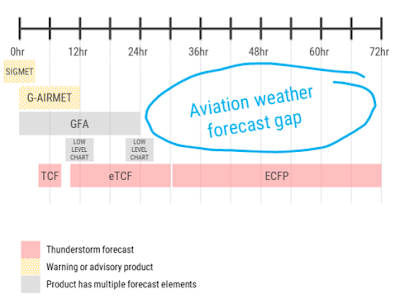

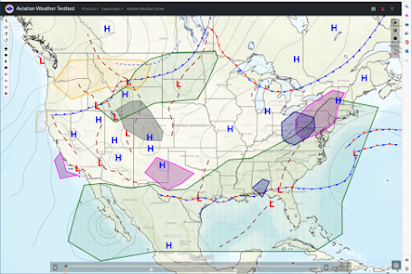

One of the most frequent requests from GA pilots is for a longer-term outlook of what the weather may look like over the next couple of days. The Aviation Weather Testbed (AWT) has examined outlook graphics covering the Day 2 and Day 3 time frames in the past and has raised several questions: how to display a graphic spanning this longer time period, what aviation hazard criteria must be met for a hazard to be included, and how to provide this quick-glance first look. In this experiment, our participants created three separate graphics to show these hazards out to Day 3. The Day 2 (next-day) outlook was divided into morning and afternoon/evening forecasts, while the Day 3 outlook covered the entire daytime flying period.

Participants were given guidance on the criteria each aviation hazard must meet to be included, based on standards for GA pilots. As participants drew their outlooks, internal discussions covered the importance of having two separate Day 2 graphics and how useful this additional outlook would be for GA pilots planning flights over the next couple of days. Ideas for AWT to investigate further include adding text information, constraining layer colors or outlines, and offering additional toggles when the outlooks are displayed on the website. As we continue to refine how these outlooks may look, the next big step will be to put the graphics in front of users to assess how intuitive and useful the product is for its intended audience!